You’ve used it to write an email, plan a trip, or simply ask something you didn’t want to google. It answered instantly, in full sentences, like it knew exactly what you meant.

It sounds like a fully thought-through response. But AI doesn’t actually think. It’s not a brain or a search engine. It’s something most people aren’t even aware of.

You’ve Already Been Using It, You Just Didn’t Know

Before the chatbot era, AI was already quietly embedded in products people use every day: the spam filter keeping junk out of your inbox, Netflix deciding what shows up on your homepage, Google Maps rerouting you around traffic in real time, or even Spotify building a playlist it thinks you’ll like.

None of those felt like “AI.” They just felt like the product working on its own. That’s still what most AI actually is: invisible infrastructure making decisions in the background. The chatbot is just the first time it talked back.

A Set of Instructions, Not a Mind

An AI model is a set of instructions built from patterns across a massive amount of text: articles, books, forums, research papers, and more. Not everything, though. Paywalled content, private data, and anything published after its training cutoff are typically off the table. It learned from what it had access to, not everything that exists.

Yet it doesn’t keep a copy of what it read; it just learns from it, the same way you don’t remember every book you’ve ever read, but they shaped how you think.

When you ask it something, it isn’t searching through everything it was trained on. It’s predicting, based on those learned patterns, what a coherent response looks like. Word by word.

Under the Hood: How It Actually Generates a Response

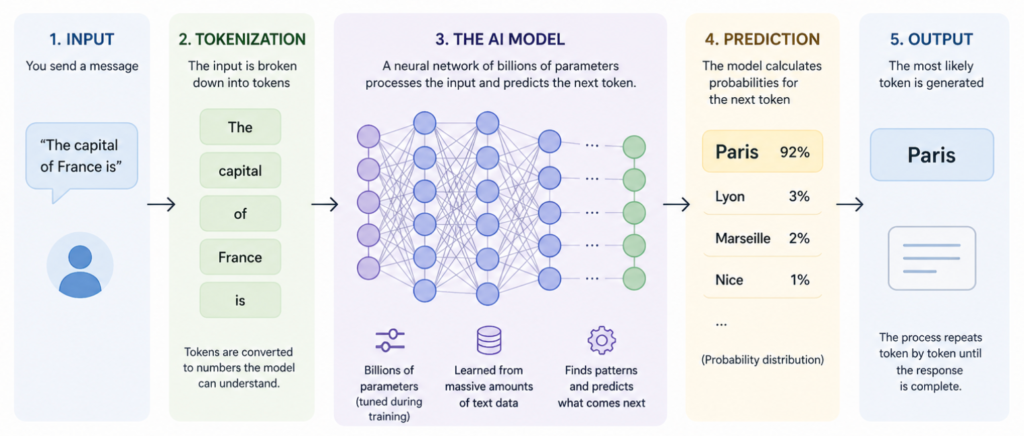

Most people imagine the model as a vast database it searches through to find an answer. That’s not what happens. Here’s a simplified version of what’s actually going on:

The model breaks your message down into tokens, chunks of text that can be as short as a single letter or as long as a full word. “Unhappy” might become [“Un”, “happy”]. Each token gets converted into a number, and those numbers are fed into layers of mathematical operations.
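To make the token idea concrete, here is a minimal sketch in Python. It assumes a tiny made-up vocabulary and a simple "grab the longest known piece" rule. Real models use much larger vocabularies and more elaborate splitting schemes, so treat the names `VOCAB`, `tokenize`, and `encode` as illustrative only.

```python
# Toy vocabulary: every piece of text this pretend model knows, mapped to a number.
# The pieces and IDs are made up for illustration.
VOCAB = {"Un": 0, "happy": 1, "hap": 2, "py": 3, "U": 4, "n": 5, "h": 6, "a": 7, "p": 8, "y": 9}

def tokenize(text: str) -> list[str]:
    """Split text into the longest known pieces, working left to right."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest possible piece first, then shrink until one matches.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in VOCAB:
                tokens.append(piece)
                i = end
                break
        else:
            raise ValueError(f"no vocabulary piece matches {text[i]!r}")
    return tokens

def encode(text: str) -> list[int]:
    """Convert text into the list of numbers the model would actually receive."""
    return [VOCAB[token] for token in tokenize(text)]

print(tokenize("Unhappy"))  # ['Un', 'happy']
print(encode("Unhappy"))    # [0, 1]
```

The takeaway is the second line of output: by the time your message reaches the model, it is a list of numbers, not words.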

Those layers (called a neural network) were shaped during training. Think of them as billions of dials, each one calibrated slightly based on patterns the model saw across billions of examples. When you send a message, the current settings of all those dials work together to calculate one thing: what token is most likely to come next.

Then it picks that token, appends it to what’s been written so far, and asks the same question again. And again. Until it decides the response is complete.

For example, you type: “The capital of France is”

The model doesn’t look up the answer. It calculates that, given every pattern it’s seen in training, the next token is overwhelmingly likely to be “Paris.” It outputs “Paris,” not because it knows geography, but because “Paris” follows that phrase more reliably than anything else.
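The predict, append, repeat loop can be sketched in a few lines of Python. This is a toy under loud assumptions: the probability table `NEXT_TOKEN_PROBS` is hand-written and only looks at the last word, whereas a real model computes its probabilities from billions of dials and the whole text so far. The function names are hypothetical too.

```python
# Hand-written stand-in for what a model learned: for a given last word,
# how likely each next word is. All numbers are made up for illustration.
NEXT_TOKEN_PROBS = {
    "is": {"Paris": 0.92, "Lyon": 0.05, "big": 0.03},
    "Paris": {".": 0.90, ",": 0.10},
    ".": {"<end>": 1.0},
}

def predict_next(tokens):
    """Return the most likely next token given the text so far."""
    probs = NEXT_TOKEN_PROBS[tokens[-1]]
    return max(probs, key=probs.get)

def generate(prompt_tokens, max_new_tokens=10):
    """Pick a token, append it, and ask again until the model signals the end."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        next_token = predict_next(tokens)
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens

prompt = ["The", "capital", "of", "France", "is"]
print(" ".join(generate(prompt)))  # The capital of France is Paris .
```

Notice there is no lookup of facts anywhere in this code. "Paris" comes out because it has the highest number attached to it. This sketch always takes the top token; real chatbots often add a bit of randomness to that choice, which is one reason the same question can get different answers.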

Scale that process across a trillion parameters and billions of training examples, and you get something that looks, very convincingly, like “understanding.” This is what makes modern AI genuinely impressive, and genuinely limited. The scale is incomprehensible. The underlying process is still just very sophisticated pattern-matching.

Training vs. Using It

There are two completely separate things happening with AI that most people treat as one:

Training is building the kitchen. It happens up front, and it’s expensive and slow, requiring enormous amounts of data and computing power. Think of it like a library spending years cataloguing every book it can get its hands on, organizing every idea, cross-referencing everything.

By the time it’s done, the patterns are baked into the instructions. This is why some AI platforms are better for certain tasks than others: each was trained on different data and tuned toward different goals.

Inference is cooking the meal. It’s what happens every time you send a message and get a response. This is where many people assume they’re “training” the AI by feeding it context and insights. But the kitchen is already built. What you give it shapes that one response by framing what the model already learned; it doesn’t turn any of the dials while you chat. You’re just using it.
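Here is a rough Python sketch of that split. It assumes a deliberately tiny "model" that just counts which word follows which. The functions `train` and `infer` and the three-sentence corpus are made up for illustration, and real training is vastly more involved.

```python
import copy
from collections import Counter, defaultdict

def train(corpus):
    """Training: read the data once and bake the patterns into counts."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    # Return plain dicts: this is the finished "kitchen".
    return {word: dict(followers) for word, followers in counts.items()}

def infer(model, word):
    """Inference: read the baked-in patterns. Nothing in the model is updated."""
    options = model.get(word)
    if not options:
        return None
    return max(options, key=options.get)

corpus = ["the cat sat", "the cat ran", "the dog sat"]
model = train(corpus)          # slow, expensive step (in real life)
snapshot = copy.deepcopy(model)

print(infer(model, "the"))     # cat
print(model == snapshot)       # True: using the model did not change it
```

In this toy, asking questions never touches the counts. The only way to change what it "knows" is to run `train` again on different data.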

Why It Gets Things Wrong With Total Confidence

This is the part worth understanding: because the model is predicting rather than verifying, it can produce something that sounds completely authoritative and is completely wrong. The industry calls this hallucination. It’s not lying. It doesn’t know it’s wrong, and that’s the structural limitation nobody talks about enough.

The model doesn’t know what it doesn’t know. It has no reliable built-in signal that tells you when it’s unsure, so it can sound just as confident when it’s wrong as when it’s right. That’s not a bug they forgot to fix. That’s how it was built.

Think of it like a student who read every textbook they could get their hands on but never took a test. They can talk about anything confidently. That doesn’t mean they’re always right.
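A small Python sketch shows why the confidence looks the same either way. It assumes made-up scores for two questions and uses softmax, a standard formula for turning scores into probabilities. The specific numbers and variable names are illustrative, not taken from any real model.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that always add up to 1."""
    top = max(scores.values())
    exps = {token: math.exp(score - top) for token, score in scores.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}

# Made-up scores for a question with a well-documented answer.
real_question = softmax({"Paris": 9.0, "Lyon": 4.0, "Rome": 3.0})

# Made-up scores for a question about something that never happened.
# The model still has to rank some tokens above others.
fake_question = softmax({"1987": 8.5, "1992": 4.0, "1975": 2.0})

for name, probs in [("real", real_question), ("fake", fake_question)]:
    best = max(probs, key=probs.get)
    print(name, best, round(probs[best], 2))
```

Both questions end up with a top answer above 95 percent. The probability describes how strongly one token outranks the others, not whether the answer is true.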

The Companies That Moved Too Fast, and Paid for It

In the past few years we’ve seen a wave of companies making dramatic moves: cutting customer service teams, reducing editorial staff, shrinking QA and support roles, with AI positioned as the replacement. The narrative was efficiency, yet the reality turned out to be a lot more complicated.

- Salesforce CEO Marc Benioff confirmed the company cut its customer support headcount from 9,000 to 5,000 in 2025 after AI agents took over a growing share of service interactions. This quickly turned into regret, as the company ran into reliability issues and challenges managing the models.

- Duolingo reduced its contractor base significantly in early 2024, citing AI as part of the rationale. The backlash, from users, creators, and press, was swift. The quality gap between human-generated and AI-generated content became visible in ways the company hadn’t anticipated.

These weren’t failures of the technology in isolation. They were failures of expectation. The companies treated AI as a straight replacement for human judgment, rather than a tool that augments it. And when the limitations showed up (hallucinations in customer responses, inconsistency in output quality, an inability to handle anything genuinely novel), there was no human layer left to catch them.

The costs weren’t just operational. Rebuilding trust with customers, rehiring and retraining staff, and repairing brand damage added up in ways that didn’t show up on the original efficiency calculation.

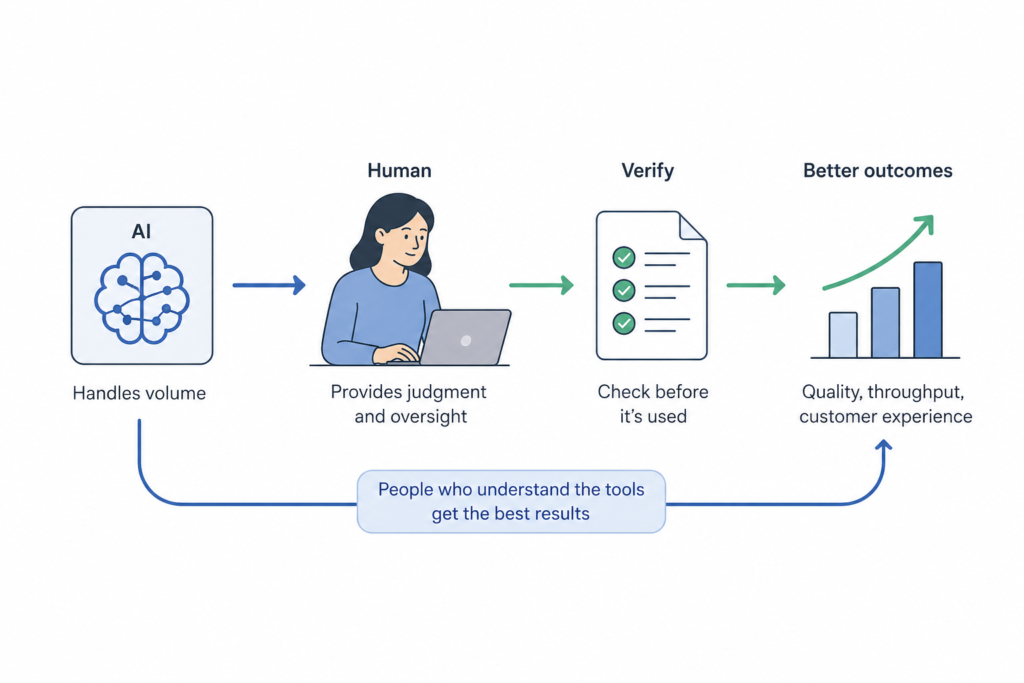

The lesson isn’t that AI doesn’t work. It’s that it works differently than most organizations assume. Prediction engines don’t replace judgment; they scale it.

What Proper AI Adoption Actually Looks Like

The companies getting the most out of AI right now aren’t the ones who replaced the most people.

They’re the ones who are clearest about what they’re actually asking AI to do, and who make sure the people using it actually understand the models they’re working with.

A few patterns that separate the ones getting it right:

- AI handles volume, humans handle judgment. AI drafts the first response, a human reviews anything with real consequence. The speed gain is real. The risk is managed.

- Verification is built in, not bolted on. Any output that influences a real decision (a customer email, a financial summary, a legal document) goes through a human check before it leaves the building. Not because the AI is usually wrong, but because when it is wrong, you want to catch it.

- The people using the tools understand the tools. Teams that know AI predicts rather than verifies use it differently. They push it harder on the tasks it’s good at, and they don’t trust it on the tasks it isn’t.

- Gains are measured on outcomes, not headcount. The question isn’t “how many roles did we reduce?” It’s “did quality improve, did throughput increase, did customers have a better experience?” Those are the metrics that hold up.
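For teams that want to wire the first two patterns into a workflow, the rule can be as simple as the Python sketch below. The category names, the `Draft` type, and the `route` function are hypothetical; every team would define "real consequence" for itself.

```python
from dataclasses import dataclass

# Hypothetical list of output types a team treats as high-stakes.
HIGH_STAKES = {"customer_email", "financial_summary", "legal_document"}

@dataclass
class Draft:
    kind: str   # what sort of output the AI produced
    text: str   # the AI-written first draft

def route(draft: Draft) -> str:
    """Send high-stakes drafts to a person; let low-stakes ones through."""
    return "human_review" if draft.kind in HIGH_STAKES else "auto_send"

print(route(Draft("legal_document", "Draft contract clause...")))  # human_review
print(route(Draft("internal_note", "Meeting recap...")))           # auto_send
```

The design choice is that the check lives in the pipeline itself, so a draft with real consequences cannot skip the human by accident.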

What This Means for You

AI models are exceptional at synthesizing information, drafting language, explaining concepts, and working through structured problems.

They’re less reliable for verified facts, real-time information, or anything where being wrong has real consequences.

The practical version: use what the model produces as a first draft or a brainstorming tool. Not a final answer.

Quick Summary

The people who built these models think of them as statistical systems. Most people using them think of something closer to intelligence. Those two mental models produce very different expectations.

A model is a set of instructions, built from patterns, designed to predict what a useful response looks like. Impressive because the scale is genuinely incomprehensible. Limited because predicting is not the same as knowing.

If you’ve made it this far, thanks for sticking around.

Disclaimer: this is just my perspective, shaped by years of building and running marketing across multiple industries. What have I learned? This translation gap between infra and communication applies everywhere, and it’s something I’m determined to help solve.

I’d love to hear your take, whether it’s a different POV, a question, or something this article reminded you of. Find me on X or LinkedIn. Growing together beats going at it alone.